How to Design Fail‑Safe Escalation Paths for Automated Resolutions

Designing fail-safe escalation paths ensures customers never get stuck in limbo during automated resolutions. Focus on clear policies, context preservation, and SLA-aware routing to enhance escalation quality and prevent hidden risks.

Containment metrics can look healthy while customers quietly stall at the handoff. You see rising “automation success,” yet complex cases bounce between tools, lose context, and age in queues. We’ll walk you through how to design escalation paths that never leave a customer in limbo and never leave your team guessing.

This isn’t about throwing more people at the problem. It’s about treating escalations as a product with requirements, SLOs, and controls. We’ll discuss the specific ways escalation design fails, which signals predict trouble, and a practical framework—policy thresholds, context snapshots, SLA-aware routing, and compensating controls—that you can implement without a risky overhaul.

Key Takeaways:

Treat escalation quality as a first-class objective alongside containment

Encode policies and thresholds as rules so exceptions escalate consistently

Preserve a complete context snapshot to eliminate rediscovery and repetition

Instrument SLOs for time to human, handoff success, and re-escalation loops

Use SLA-aware routing, fallbacks, and compensating controls to prevent dead ends

Guarantee idempotent writebacks and audit logs to reduce risk and rework

When Containment Hides Escalation Failures

Containment without reliable escalation design creates hidden risk. Automations suppress complexity while edge cases stall, customers repeat themselves, and auditors find gaps later. The fix is explicit: define when automation must yield, preserve context, and enforce time-bound routing to the right human. A failed payment dispute at midnight shouldn’t wait for business hours.

Why containment without escalation discipline backfires

Containment shines on dashboards but can quietly produce aged exceptions and silent failures. When a bot asks for more data and then stalls without a timer, customers try again, abandon, or call—often with rising frustration. Meanwhile, your team sees “contained” sessions, not the backlog forming in the shadows.

The mismatch is structural: most automation paths are optimized for the happy path, not the exit ramps. If you don’t formalize objective triggers and time bounds, the system can’t tell when to stop trying and escalate. Treat escalation quality like a product requirement. Define thresholds, SLOs, and a minimum context package so the handoff is both timely and complete.

Containment should remove trivial work and expose complexity fast:

Require a time-to-human SLO for high-risk paths

Trigger escalation on silence or retried failures, not just explicit errors

What is a fail-safe escalation path and why does it matter?

A fail-safe path guarantees the right human sees the right case, with the right context, within a defined window. That path includes objective triggers, role-based routing, channel fallbacks, retries, and compensating controls that prevent blind alleys. If a step fails transiently, the system recovers without duplicating actions or losing evidence.

This matters because broken escalations carry outsized costs: churn, regulatory exposure, and rework. A fail-safe design sets clear ownership and makes success observable. You should know, within minutes, that a critical dispute is with an accountable owner who has full history and can act immediately. That confidence reduces firefighting and shortens time to resolution.

Elements of a fail-safe path you can standardize:

Objective triggers and thresholds tied to risk tiers

SLA timers with automatic fallbacks and acknowledgements

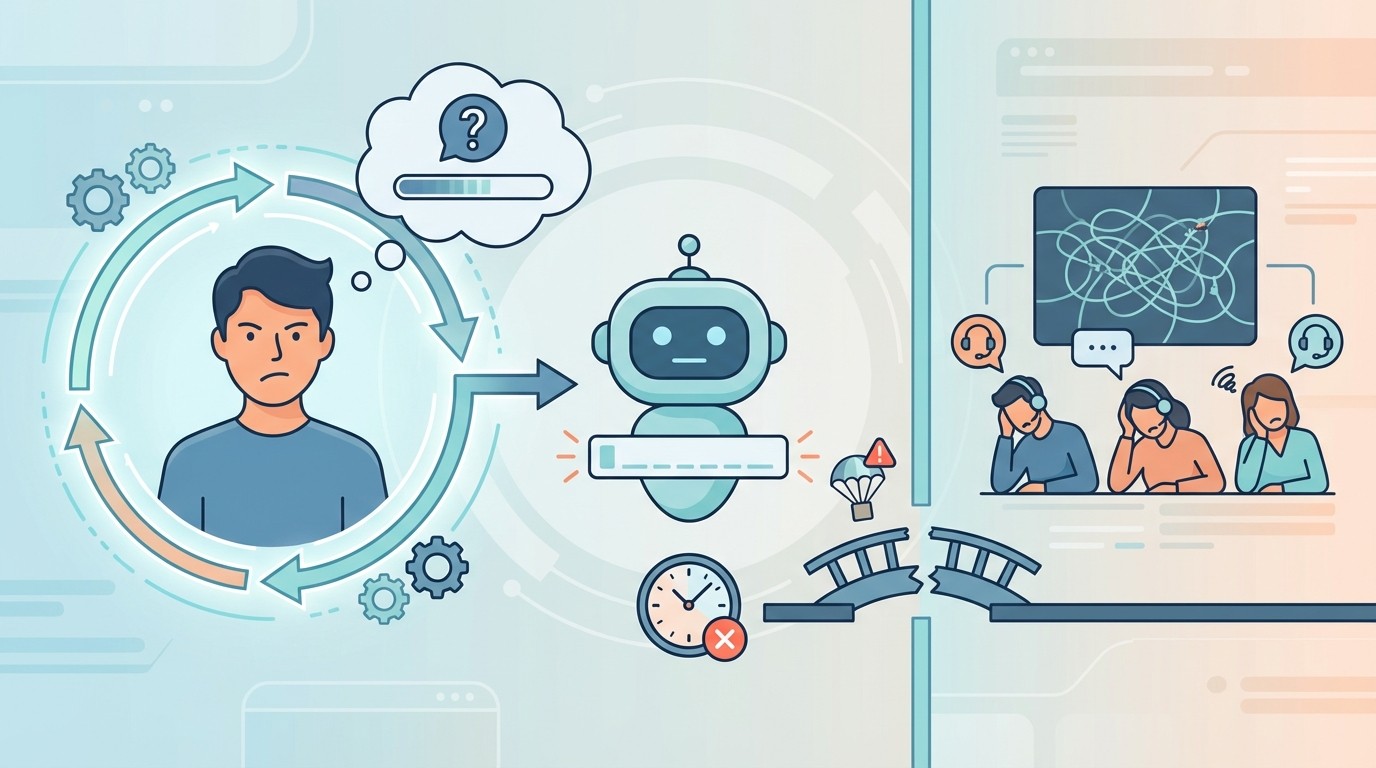

The hidden failure modes in AI handoffs

Most escalation failures aren’t exotic—they happen at the handoff. Context drops when transcripts, structured inputs, and attempted writebacks aren’t packaged together. Messages misroute because consent or channel preferences aren’t respected. Approvals stall when no timer owns the next move. These are design gaps, not agent performance problems.

Preserve the full story: transcript, validations, error payloads, consent artifacts, and idempotent identifiers. Pass it as one case snapshot into the human’s system of action. Add channel fallbacks and retries so transient failures don’t duplicate work. This aligns with guidance on why AI often fails at the handoff rather than in the automation itself, and it is directly measurable through handoff success rate and re-escalation loops.

If you want help pressure-testing your paths, we can walk your team through a quick review and tabletop exercise. Book a 30-Minute Design Review.

Escalations Are a Design Problem, Not a Staffing Problem

Escalations go wrong when rules live in scripts and tribal knowledge. Encoding policy in the workflow engine makes decisions consistent and auditable, while freeing people to handle true exceptions. The key is clarity on thresholds, context packaging, and writebacks so escalations shrink in number and improve in quality.

What traditional approaches miss about thresholds and policy encoding

It’s tempting to “just add headcount” when escalations spike, but unclear thresholds defeat any staffing plan. If eligibility, risk levels, and approval rules live across playbooks and macros, two agents will take two different paths. That variability creates errors and rework, even with the best training.

Model policy in the engine as data: eligibility rules, amounts, dates, and approval tiers with clear conditions. When policy changes, update rules once and propagate everywhere. Routine paths then resolve automatically, and exceptions escalate with evidence. This is faster for customers, safer for compliance, and far easier to audit later.
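Here is a minimal sketch of policy modeled as data, assuming a refund-style path. The `RefundPolicy` fields, the `decide` function, and every limit shown are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RefundPolicy:
    """Hypothetical policy record; the limits are illustrative only."""
    max_auto_amount: float       # resolve automatically at or below this amount
    max_approval_amount: float   # above the auto limit, up to here: needs approval
    max_days_since_charge: int   # eligibility window in days


def decide(policy: RefundPolicy, amount: float, days_since_charge: int) -> str:
    """Return 'auto_resolve', 'request_approval', or 'escalate'."""
    if days_since_charge > policy.max_days_since_charge:
        return "escalate"  # outside the eligibility window, so a human decides
    if amount <= policy.max_auto_amount:
        return "auto_resolve"
    if amount <= policy.max_approval_amount:
        return "request_approval"
    return "escalate"


# One place to change when the policy changes (assumed values).
POLICY = RefundPolicy(max_auto_amount=50.0,
                      max_approval_amount=500.0,
                      max_days_since_charge=60)
```

Because the thresholds live in one record rather than in scripts and macros, a policy change is a data update and every path picks it up at once.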

Context, not just channel choice, determines success

Channel mapping is table stakes. What determines whether an escalation succeeds is context preservation. Agents need to see transcript, structured inputs, validation results, attempted writebacks, and any downstream error payloads. If they start at discovery, not context, you’ve already added unnecessary minutes and risk.

Deliver a single context snapshot to the human’s system of action. Make ownership, next step, and SLO visible the moment it lands. This eliminates rediscovery, reduces repetition, and shortens the path to resolution. It also enables tiering models—outlined in resources like how to build a tiered troubleshooting framework—to work as intended because each tier receives the right payload.

Why writebacks and audit shift escalation risk

Many escalations exist because systems can’t close the loop reliably. When outcomes sync back with idempotent writebacks and retries, routine cases disappear from queues. For the remaining exceptions, a complete audit trail with timestamps, consent, and decision logs reduces regulatory risk and accelerates reviews.

Treat writeback reliability and evidence capture as controls, not conveniences. If a step fails, retries should run automatically with backoff, and failures should include a precise error payload in the case snapshot. That combination shrinks the escalation surface area and improves quality when a human does need to step in.
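As a rough sketch of that control, the function below retries a writeback with exponential backoff and keeps each error for the case snapshot. It assumes the downstream system deduplicates on the idempotency key; `TransientError`, `write_back_with_retry`, and the default delays are illustrative names and values, not a real API.

```python
import time
from typing import Callable, Dict, List


class TransientError(Exception):
    """Stands in for a timeout or temporary downstream failure (assumed)."""


def write_back_with_retry(
    send: Callable[[str, dict], None],
    idempotency_key: str,
    payload: dict,
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, object]:
    """Retry a writeback, reusing ONE idempotency key on every attempt."""
    errors: List[dict] = []
    for attempt in range(1, max_attempts + 1):
        try:
            # Same key each time, so a downstream that dedupes on the key
            # applies the change at most once even if a reply was lost.
            send(idempotency_key, payload)
            return {"ok": True, "attempts": attempt, "errors": errors}
        except TransientError as exc:
            # Keep the error so it can travel with the case snapshot.
            errors.append({"attempt": attempt, "error": str(exc)})
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
    return {"ok": False, "attempts": max_attempts, "errors": errors}
```

When the result comes back with `ok` set to false, the `errors` list is what a human should see in the snapshot instead of a bare "sync failed".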

The Measurable Cost of Bad Escalations

Bad escalations waste time, lose revenue, and increase risk. You can quantify this with a small set of SLOs and a simple model for rediscovery and rework. When you track the data, the cost becomes visible—and fixable—within a single quarter.

The SLOs that predict churn and regulatory exposure

A few SLOs forecast trouble before customers churn or auditors call. For high-risk disputes, target a time to human under two minutes. Keep the successful handoff rate above 95 percent, where a successful handoff means the human receives the case with complete context and acknowledges ownership. Monitor re-escalation loops, abandoned escalations, and near-miss policy breaches.
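To make those two SLOs concrete, here is a small sketch that computes them from escalation records. The `Escalation` record shape and both function names are assumptions for illustration; your event schema will differ.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Escalation:
    """Hypothetical escalation record; timestamps are in seconds."""
    triggered_at: float
    acknowledged_at: Optional[float]  # None if no human ever acknowledged
    context_complete: bool


def handoff_success_rate(cases: List[Escalation]) -> float:
    """Share of cases acknowledged by a human AND delivered with full context."""
    if not cases:
        return 0.0
    ok = sum(1 for c in cases
             if c.acknowledged_at is not None and c.context_complete)
    return ok / len(cases)


def time_to_human_breaches(cases: List[Escalation],
                           target_seconds: float = 120.0) -> int:
    """Count cases never acknowledged or acknowledged after the target."""
    breaches = 0
    for c in cases:
        if (c.acknowledged_at is None
                or c.acknowledged_at - c.triggered_at > target_seconds):
            breaches += 1
    return breaches
```

Note that an unacknowledged case counts against both measures, which is the point: a handoff nobody owns is a failure, not a missing data point.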

These measures map well to incident practices—see SLO patterns from IT incident management—and translate cleanly to customer operations. When these SLOs slide, complaints rise and remediation costs follow. Put them on the same dashboard as containment so you see the full picture, not just the easy wins.

How to quantify lost revenue and rework from loops

Loops create measurable waste. Start with rediscovery time—the minutes an agent spends reconstructing context. Add duplicate outreach and manual reconciliation when writebacks fail. Multiply by volume. Even a five-minute rediscovery penalty at scale becomes days of lost throughput monthly.
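A quick worked example shows how the rediscovery penalty adds up. The volume of 3,000 escalations a month and the eight-hour working day are assumptions chosen for illustration; substitute your own figures.

```python
def monthly_rediscovery_hours(escalations_per_month: int,
                              rediscovery_minutes: float) -> float:
    """Agent hours spent per month reconstructing context."""
    return escalations_per_month * rediscovery_minutes / 60.0


# Assumed inputs: 3,000 escalations a month, five minutes of rediscovery each.
hours = monthly_rediscovery_hours(3000, 5)   # 250.0 agent-hours
agent_days = hours / 8                       # 31.25 eight-hour working days
```

Duplicate outreach and manual reconciliation come on top of this figure, so treat it as a floor rather than a full cost model.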

Add opportunity costs: delayed cash flow, broken promises to pay, and compliance penalties. Then compare pre- and post-redesign numbers for handoff success, first contact resolution, and writeback reliability. The difference is your return on escalation quality. It’s common to see both cycle time and unit cost drop once context and SLOs are enforced.

What metrics should you track to validate escalation health?

Measure escalation frequency by reason code and the time to trigger from the first failure. Track delivery success by channel, the latency of policy-based approvals, and containment versus re-escalation rates. Tie these to business outcomes such as completed payments, verified KYC, or cleared flags.

Instrument every hop with telemetry so you can trace failures to a component, not a person. Then fix root causes—policy gaps, brittle adapters, missing timeouts—in the workflow. A focused metric set helps you upgrade the path, not pile on ad hoc processes that mask the problem.

Still dealing with rediscovery loops and aged exceptions? We’ve assembled a short checklist your team can use in weekly reviews. Get the Escalation Health Checklist.

When Automation Traps a Customer, Everyone Loses

Automation failures feel personal to customers and stressful to teams. The bot asks for data, the customer complies, and then nothing happens. The fix is operational discipline: timers, fallbacks, visibility, and evidence. Build these in, and you avoid cancellations, complaints, and fire drills.

The 3am dispute that never reaches a human

Picture a high-risk dispute submitted at 3am. The bot requests more data, the customer complies, and the flow stalls. No timer starts, no fallback channel triggers, and no human is paged. By morning, the customer cancels, and your team faces a complaint with a clock already ticking.

Time-box critical paths. Escalate on silence. Route to duty teams with pager-grade alerts when risk thresholds hit, and require acknowledgement within the SLO. If a step fails, use channel fallbacks and log the attempted actions. This isn’t extra process—it’s the guardrail that protects customers and your team.

Why do frontline teams distrust black box escalations?

Frontline teams lose trust when escalations arrive thin on context or arrive late. They see outcomes slip, and they overcorrect by bypassing automation. That creates a shadow process and erodes all the gains you’ve made elsewhere.

Build visibility into the handoff. Show exactly why a case escalated, which rules applied, and what the automation attempted. Provide one-click access to the full history so humans start at context, not discovery. Guidance on handoff clarity, like insights from why AI fails at the handoff, reinforces this point: transparency speeds resolution.

How leadership experiences audit surprises

Leaders get blindsided when evidence is scattered. An auditor asks for consent and decision logs, and teams scramble across tools. Every minute wasted retrieving artifacts is a minute not spent helping customers—and a risk exposure you didn’t need.

Centralize the audit record. Store timestamps, identities, approvals, and idempotency keys in one place and make them exportable. Rehearse evidence retrieval quarterly. A documented approach, similar to the governance patterns in an escalation matrix overview, turns audits from fire drills into routine reviews.

A Practical Framework for Fail-Safe Escalations

A reliable escalation design has four pillars: objective triggers, context preservation, SLA-aware routing, and compensating controls. Implement them as rules and services, then test with simulations before you touch production. We’ll walk you through each pillar with practical detail your team can adopt immediately.

Objective triggers and decision thresholds

Define clear, testable triggers. Examples include payment retries exhausted, sentiment crossing a threshold, a dispute flag set, or a downstream error class that signals risk. Pair each trigger with confidence and risk thresholds that determine whether to continue automation, request approval, or escalate.
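One way to keep triggers testable is to express each as a named condition with an action, then let the most severe fired trigger win. The sketch below assumes a case arrives as a plain dictionary; the trigger names, field names, and thresholds (three retries, sentiment at or below -0.6) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Trigger:
    name: str
    condition: Callable[[dict], bool]
    action: str  # "continue", "request_approval", or "escalate"


# Higher number wins when several triggers fire at once.
SEVERITY = {"continue": 0, "request_approval": 1, "escalate": 2}

# Illustrative triggers and thresholds, not recommendations.
TRIGGERS = [
    Trigger("payment_retries_exhausted",
            lambda c: c.get("payment_retries", 0) >= 3, "escalate"),
    Trigger("dispute_flag",
            lambda c: bool(c.get("dispute_flag", False)), "escalate"),
    Trigger("negative_sentiment",
            lambda c: c.get("sentiment", 0.0) <= -0.6, "request_approval"),
]


def evaluate(case: dict,
             triggers: List[Trigger] = TRIGGERS) -> Tuple[str, List[str]]:
    """Return the strongest action plus the names of every fired trigger."""
    fired = [t for t in triggers if t.condition(case)]
    if not fired:
        return "continue", []
    strongest = max(fired, key=lambda t: SEVERITY[t.action])
    return strongest.action, [t.name for t in fired]
```

Returning the fired trigger names alongside the action gives the receiving agent the reason for the escalation, and gives you reason codes to report on.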

Document these rules in a policy engine and review quarterly with risk, operations, and legal. Simulate common breaks and near misses before rollout. Treat the matrix of triggers, tiers, and owners as living documentation, aligned with tiering patterns found in operations and workflow guidance such as how to configure automated escalations in permit workflows.

Preserve context across every hop

Persist a complete context snapshot at each decision point. Include transcript, structured inputs, verification results, attempted writebacks, error payloads, and consent artifacts. Attach idempotent identifiers so retries never duplicate actions. Pass this snapshot as a single package to humans, queues, or downstream systems.
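A snapshot like this can be sketched as a single record with a completeness check. The `CaseSnapshot` class, its field names, and the choice of which fields count as required are assumptions for illustration rather than a fixed schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List


@dataclass
class CaseSnapshot:
    """Hypothetical context package passed as one unit at every hop."""
    case_key: str  # the same key is reused by every event and retry
    transcript: List[str]
    structured_inputs: Dict[str, Any]
    verification_results: Dict[str, bool]
    attempted_writebacks: List[Dict[str, Any]] = field(default_factory=list)
    error_payloads: List[Dict[str, Any]] = field(default_factory=list)
    consent_artifacts: List[Dict[str, Any]] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Names of required fields that are empty (assumed required set)."""
        required = ("transcript", "structured_inputs",
                    "verification_results", "consent_artifacts")
        return [name for name in required if not getattr(self, name)]

    def to_json(self) -> str:
        """Serialize the whole snapshot for a queue or downstream system."""
        return json.dumps(asdict(self), sort_keys=True)
```

Checking `missing_fields()` before the handoff lets you count an incomplete package as a failed handoff instead of discovering the gap when an agent opens the case.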

Consistency in schema and identifiers eliminates rediscovery and prevents customers from repeating information. It also makes your telemetry actionable because every event references the same case key. When an exception routes to a human, they should see the full history and the next recommended action immediately.

SLA-aware routing with time and event fallbacks

Route by severity and role with timers that enforce action. Critical cases should page on-call by SMS or push; routine approvals can queue for business hours with email. Start a timer on every escalation and require acknowledgement. If a case sits idle beyond the SLA, auto-escalate to the next tier and notify owners.
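The auto-escalation rule can be sketched as a ladder of tiers, each with its own acknowledgement window. The tier names, channels, and windows below are hypothetical, and a real engine would schedule timer events rather than evaluate elapsed time on demand as this simplified function does.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Tier:
    name: str
    channel: str
    ack_sla_seconds: float  # how long this tier has to acknowledge


# Hypothetical ladder for critical cases.
CRITICAL_LADDER = [
    Tier("on_call_agent", "sms", 120),
    Tier("duty_manager", "push", 300),
    Tier("head_of_operations", "sms", 600),
]


def current_owner(ladder: List[Tier],
                  seconds_since_escalation: float,
                  acknowledged_by: Optional[str] = None) -> str:
    """Return who holds the case now, moving up a tier per missed window."""
    if acknowledged_by is not None:
        return acknowledged_by  # acknowledgement stops the ladder
    deadline = 0.0
    for tier in ladder:
        deadline += tier.ack_sla_seconds
        if seconds_since_escalation < deadline:
            return tier.name
    return ladder[-1].name  # ladder exhausted: case stays with the top tier
```

For example, an unacknowledged critical case is with `on_call_agent` for the first two minutes and moves to `duty_manager` after that, which is the behavior the timer is meant to guarantee.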

Add delivery fallbacks and dead-letter queues for channel or system failures. Emit ownership transfer logs so you can prove custody throughout the process. These patterns mirror the reliability practices that keep incident response tight and translate well to customer escalations.
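Here is a minimal sketch of delivery fallbacks with a dead-letter queue, using an in-memory list in place of a real queue. The `deliver_with_fallback` function and the sender callables are assumptions for illustration; each sender is expected to return `True` on delivery.

```python
from typing import Callable, Dict, List


def deliver_with_fallback(
    message: dict,
    channels: List[str],
    senders: Dict[str, Callable[[dict], bool]],
    dead_letters: List[dict],
) -> dict:
    """Try each channel in order; park the message if all of them fail."""
    attempts = []
    for channel in channels:
        try:
            delivered = senders[channel](message)
            error = None
        except Exception as exc:  # a channel outage must not stop the fallback
            delivered = False
            error = repr(exc)
        # Every attempt is recorded, so custody can be shown later.
        attempts.append({"channel": channel, "ok": delivered, "error": error})
        if delivered:
            return {"delivered_via": channel, "attempts": attempts}
    # Nothing worked: keep the message and its history for review.
    dead_letters.append({"message": message, "attempts": attempts})
    return {"delivered_via": None, "attempts": attempts}
```

The attempt log doubles as an ownership trail: you can show which channels were tried, in what order, and why each one failed.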

Which compensating controls prevent blind alleys?

Compensating controls catch misroutes and unexpected errors. Add auto-rollback for approvals that time out. Use secondary channels when the primary fails. Provide manual override for flagged risks with clear logging. Pause automation when downstream systems degrade and surface a banner to agents so everyone understands the current constraints.

Pair controls with dashboards that visualize stalled cases and loop detection. That way, teams fix the path, not just the ticket. You can also codify roles and tiers informed by an escalation matrix reference, ensuring each control has an accountable owner.
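Loop and stall detection can start as two simple queries over your event log. This sketch assumes events are dictionaries with `case_key` and `type` fields and that an escalation is logged with type `"escalated"`; the limits of two escalations and 15 idle minutes are placeholders.

```python
from collections import Counter
from typing import Dict, Iterable, List


def find_looping_cases(events: Iterable[Dict[str, str]],
                       max_escalations: int = 2) -> List[str]:
    """Case keys escalated more than `max_escalations` times."""
    counts = Counter(e["case_key"] for e in events if e["type"] == "escalated")
    return sorted(key for key, n in counts.items() if n > max_escalations)


def find_stalled_cases(last_activity: Dict[str, float],
                       now: float,
                       idle_limit_seconds: float = 900.0) -> List[str]:
    """Case keys with no activity for longer than the idle limit."""
    return sorted(key for key, ts in last_activity.items()
                  if now - ts > idle_limit_seconds)
```

Feeding both lists into a dashboard gives teams a view of the path itself, so a recurring loop shows up as a pattern to fix rather than a series of unrelated tickets.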

How RadMedia Makes Escalations Fail-Safe

RadMedia approaches escalation as part of closed-loop resolution, not an afterthought. Context, timers, fallbacks, and writebacks are baked into the same engine that runs outreach and in-message self-service. That means fewer exceptions, faster handoffs, and complete audit trails—without asking your team to wire it all together.

Escalation with context baked in

RadMedia packages every attempted action, validation, and message into a case snapshot. When an exception triggers, agents receive the full story inside their system of action, not a ticket number and a mystery. They see transcript, structured inputs, verification results, attempted writebacks, and error payloads.

This removes rediscovery work and reduces customer repetition. It also ties directly to audit exports with timestamps, consent events, and decision logs—reducing regulatory risk while improving first contact resolution. In short, agents start at context, not discovery, and cases close faster.

Autopilot exceptions, throttles, and SLO monitors

The RadMedia Autopilot Workflow Engine executes rules, advances based on actions or timers, and monitors SLOs such as time to human and handoff success rate. When downstream systems degrade, it throttles requests and switches to fallbacks. When timers expire, it escalates with acknowledgements, notifies owners, and updates custody logs.

Teams see dashboards for stalled cases and loop detection, turning firefighting into systematic improvement. These patterns align with SLO-based alerting in mature ops practices, like the approaches described in incident management SLO guidance, but applied to customer escalations.

Writeback guarantees and audit evidence

RadMedia’s writeback guarantees keep systems synchronized. Idempotency keys and retries protect consistency so a transient network issue never duplicates actions. Each decision and consent event is logged and exportable to your SIEM or data lake.

This reduces manual wrap-up, keeps records accurate, and provides ready-to-use evidence for audits and disputes. Many “escalations” disappear when outcomes reliably write back; the rest move faster because the evidence is complete and immediately available.

How does RadMedia route across channels and approvals?

RadMedia sequences SMS, email, and WhatsApp based on consent and responsiveness. Critical cases page via SMS and push; routine approvals route through email with embedded summaries. When approvals are required, RadMedia attaches risk summaries and captures sign-off inside the message.

Fallbacks handle delivery failures, and SLA timers enforce acknowledgement and ownership transfer. Exceptions escalate with full context to the right tier—no portal detours or manual reconciliation. If you’d like to see how this maps to your workflows, we’re happy to walk you through a tailored example. Schedule a Working Session.

Conclusion

Containment should never bury complexity. When you treat escalations as a design problem—encode policy as rules, preserve context, enforce SLOs, and guarantee writebacks—you reduce cost and risk while improving outcomes. Start with one high-volume path. Map the triggers and thresholds, define the context snapshot, and add timers plus fallbacks. Small, disciplined changes create durable reliability. RadMedia can help you get there without burdening your team.